We recently upgraded our monolith application from Rails 6.0 to Rails 6.1. Drawing on our experience from previous Rails upgrades, we have streamlined the process and we want to share it with you.

In this post we are going to give some insights on our workflow, from organizing such a milestone to actually delivering it without blocking an engineering team of more than 160 developers, building an application that peaks at more than 100k requests per minute.

Introduction

The core application of Skroutz is a large Rails monolith heavily utilizing MariaDB, MongoDB, Elasticsearch, Kafka, Redis and Memcached. We also use Jenkins for our CI and various tools like Sentry, NewRelic and Grafana for monitoring.

Even though we were upgrading to a minor version, Rails 6.1 introduced a notable amount of changes affecting many parts of our codebase and the aforementioned components.

We will describe the process we followed including some key points that allowed us to have a smooth release (such as our deprecation handling mechanism, how we approached backportable and non-backportable changes, canary deployment and more).

Organizing the upgrade

Spending time and resources to properly organize such a milestone is crucial for a successful delivery so we started with brainstorming and discussions on the following three questions: who, how and when.

Who

We have a team named Core that, among other things, is responsible for keeping the application healthy, modern and productive.

Although the whole upgrade was driven by this team, all Skroutz’s teams were involved with much of the work to be done. Why?

- Share the knowledge

  With every upgrade, new things become available, some things start working differently than before and others are no longer there.

  Engineers who work directly on these changes become familiar with them far more effectively than by just reading the changelog of the new version. Additionally, the knowledge they gain is communicated much more easily and directly to the other members of their team.

- Cross team work is beneficial in many ways

  This is a very good opportunity for engineers to

  - familiarize themselves with sections of the codebase that don’t belong to their domain

  - meet and work with engineers outside of their team

  - exchange knowledge, share tips, hacks and cat photos :P

- Speed up the process

  It’s much easier and more productive when problems in a specific code section are investigated and fixed by the team that owns it.

At Skroutz we have organized the engineering team under product groups with each group consisting of a handful of teams.

For the upgrade process, each product group assigned to one of its members the role of the Contact Person with the following responsibilities:

- Single point of reference

  Address any requests for help or information coming from the Core team.

- Delegation

  Work directly on a group’s issue regarding the upgrade or pass it on to the proper member of the group.

- Sync

  Stay up to date with the status of the upgrade, communicate developments affecting the group’s pipeline, raise a flag and ask for help in case of delays or blocking items.

How

For a milestone of this size, effective communication and task breakdown are critical.

Tracking

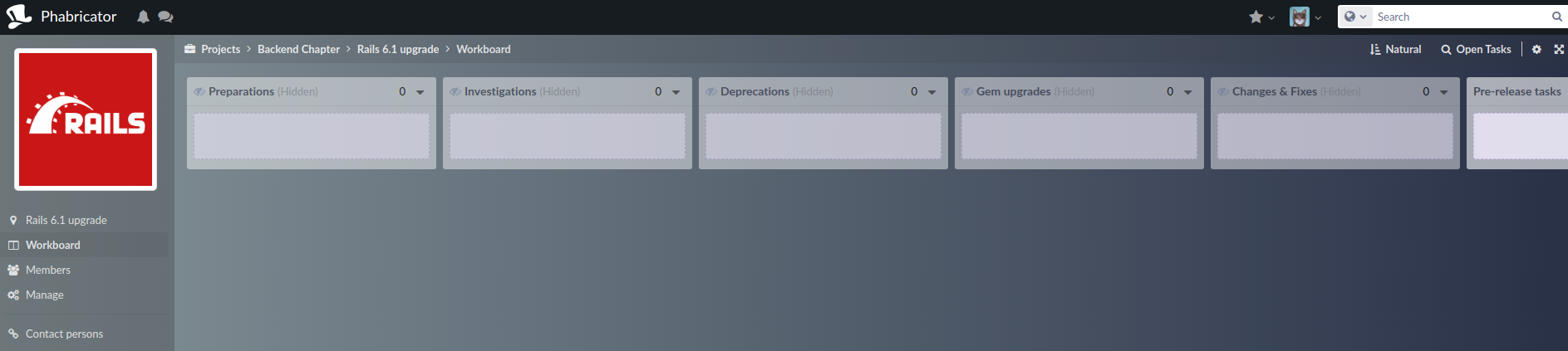

Since upgrading Rails is a recurring task, we use a dedicated project in our tracking system and we create milestones for each explicit upgrade.

The workboard contains columns categorizing the tasks based on their nature, so we can easily have a good overview of the state of the upgrade process, what’s left to be done, what’s blocked etc.

The nature of the tasks varies for each application but the following categories should be pretty common for everyone.

- Preparations

  Tasks for preparing the upgrade process before the actual work starts - find more in the Preparation section below

- Investigations

  Tasks for items that need investigation, for example checking whether a specific gem has a version compatible with the target Rails version, or whether the CI needs modifications to play well with the new version

- Deprecations

  Tasks for complying with the suggestions of the Active Support deprecation warnings for the target version - find more in the Deprecations section below

- Gem updates

  Tasks for updating internal or external gems to their new Rails compatible version

- Changes & Fixes

  This category contains all the tasks that actually make the codebase compatible with the new Rails version. Most commonly, these tasks involve fixing bugs caused by changes that were not covered by a deprecation, or modifying code to use a newly introduced Rails feature.

- Pre-release tasks

  Tasks for actions that need to be done after everything seems to be in place and before the actual release (such as smoke tests, creating the deploy plan etc.)

- Post-release

  Tasks for actions that need to be taken after the new version is released - these could be cleanups, performance monitoring etc.

Communication

We created a Slack channel joined by the Core Team, the Group Contact Persons and any other engineer interested in the upgrade and we set up our tracking system to publish notifications of the Rails upgrade milestone to it.

Having a dedicated place for communication had many benefits:

- Anything related to the upgrade was shared at the channel - the information was not being spread to emails, private conversations or other communication channels. We didn’t have to remember what was discussed and where, everything was available and discoverable in a single place - we could revisit the channel at any time in the future and find what we’re looking for.

- Every member was constantly in sync with the upgrade developments - any accomplishments, resolutions, blocking factors or discussions were communicated to the channel - even if someone got involved at a later phase of the milestone, the information was there.

- Something that possibly affected a specific group’s code area was visible to any member of the channel - everyone could contribute and familiarize themselves with almost all introduced changes of the upgrade.

Given the above, the “How” could be summed up to:

- The upgrade has to be well broken down in tasks on the milestone workboard in the tracking system - when everything is resolved, we’re ready for the release.

- Whatever we need - help, raise a flag, share a finding - use the Slack channel and let the discussion begin.

When

Even though planning a Rails upgrade is hard and can easily go off track, there are one or two things that can help us accomplish it in a safer manner.

Cross team work

The upgrade should be a cross team effort.

Our Core team already had this task in its pipeline, but involving other teams, which have their own planning, at the last minute would not work. To avoid this, we had to evaluate the required effort and how it is distributed across the other teams’ components early in the process - three months before the date we wanted the release to take place.

Take baby steps - don’t jump at once

Having a well tested application with a green CI build doesn’t mean that everything will be fine once we go live. There are many things that could go wrong - from degraded performance to bugs showing up only in production - and the sooner we learn about it the better it is.

Upgrading an application to a newer Rails version usually means:

- update the gems to a compatible version

- modify the codebase to conform with the new conventions

- change previously deprecated mechanisms to the suggested ones (for example, the `dalli_store` that doesn’t play well in Rails 6.1 and can be replaced by the `mem_cache_store` implementation as suggested by both Rails and the Dalli gem)

Instead of packing all of the above in a single deployment, we isolated any backwards compatible changes and shipped them as soon as possible in the current Rails version.

Preparation

We decided how we will organize the upgrade. Time to start preparing it - we couldn’t just shout out to Slack “Hey everybody, start upgrading the application”.

As previously mentioned, we wanted to measure the effort and break it down efficiently in tasks. How do we do this though?

Changelogs

Obviously, the first step was to read the changelogs to get an idea of what is changing in the new version. Besides teaching us about new features that our application could use, this step also makes it much easier to understand and resolve the failures that show up later in the upgrade process.

But. There will be a lot of changelog entries for which it’s not very obvious how they affect our application.

This one for example:

Fix complicated has_many :through with nested where condition

Does this mean that we are already affected by this bug in our current version? If yes, are we already using a workaround?

Since we are talking about a monolith built by a large engineering team, no one can know every single bit of it. An engineer can’t answer the questions above unless they actually wrote code that revealed this specific bug. But even in this case, what about the rest of the changelog entries?

So, after this step, what will help us get a better idea of what is going on is to take a look at our CI. How many failures do we have in the new version? But there’s a prerequisite for that step. Updating our gems…

At this point, we should create a branch (we named ours rails-upgrade-main) in which we will start adding the commits that will be merged to our main branch when we are ready to ship the upgrade.

Gems update

It would be so great if we could just change the Rails gem version in the Gemfile, run bundle and get the green message.

But that’s pretty uncommon. A monolith usually comes with a Gemfile full of dependencies and it’s almost certain that you’ll have to upgrade some or many of them to a version compatible with the target Rails version.

So, after changing the Rails version in the Gemfile, we run bundle update rails and start resolving any failures that arise due to other gem incompatibilities.

This usually means that we have to

- visit the gem’s homepage to locate the appropriate version

- read the changelogs and check if the changes affect the gem’s usages in our codebase

We use Appraisal in all of our internal gems and testing their compatibility with the Rails version was as simple as creating a new appraisal definition and making sure that the tests are green. In most cases, all we had to do was to extend their Rails dependency to include the new one.

A very good practice here is to check if the new gem’s version is also compatible with the current Rails version. If yes, then this version bump should be brought to the main branch and deployed early. This will allow us to identify and deal with gem issues incrementally and gem by gem instead of dealing with all of them upon the upgrade release. So, instead of pushing the gem version bumps to the rails-upgrade-main branch, use the main branch and ship them one by one or as we see fit.
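One way to automate the check described above is to compare a gem’s declared Rails requirement against both versions using RubyGems’ own `Gem::Requirement`. The sketch below is only illustrative: the helper name `compatible_with_both?`, the sample constraints and the patch versions are assumptions, not part of our tooling.

```ruby
require 'rubygems'

# Hypothetical helper: true when a dependency constraint (as written in a
# gemspec) admits both the Rails version we run today and the target one.
def compatible_with_both?(constraints, current:, target:)
  requirement = Gem::Requirement.new(*constraints)
  [current, target].all? do |version|
    requirement.satisfied_by?(Gem::Version.new(version))
  end
end

# A gem that already widened its Rails dependency can be bumped on main.
widened = compatible_with_both?(['>= 5.2', '< 6.2'], current: '6.0.4', target: '6.1.4')

# A gem pinned to the 6.0 series needs a new release before the upgrade.
pinned = compatible_with_both?(['~> 6.0.0'], current: '6.0.4', target: '6.1.4')

puts "widened constraint satisfied by both versions: #{widened}"
puts "pinned constraint satisfied by both versions:  #{pinned}"
```

A constraint that passes for both versions is a candidate for an early version bump on the main branch; one that fails for the current version belongs in rails-upgrade-main.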

Ideally, from this step, the rails-upgrade-main branch should contain only the commit that bumps the rails gem version to the target one.

rake app:update

So, we have a branch whose rails version is the target one and we can bundle successfully.

At this point, we need to execute the rake app:update task as noted here and also configure the framework defaults.

The new Rails version might have different configuration defaults than the previous version. However, after following the steps described above, your application would still run with configuration defaults from the previous Rails version. That’s because the value for config.load_defaults in config/application.rb has not been changed yet.

We follow the interactive session and proceed based on our application’s setup. At the end we should carefully review the changes, especially those related to the new version’s defaults, and commit them in the rails-upgrade-main branch.

Test Suite

We have successfully bundled, we adapted to the new version’s configuration and we want to run the test suite to see what’s going on.

Extensively testing our application makes milestones like the Rails upgrade much safer and gives us more confidence that everything will be fine.

In our application, we have ~75k RSpec examples and we have set up our CI to distribute them across a group of servers, which cuts the duration from the hours a sequential run would take to just 15 minutes.

Our first execution finished with more than 1.5k failures. Even though this seemed kind of disappointing, we already knew the root cause, along with the fix, for the majority of them. Deprecations :)

Deprecations

Rails comes with a deprecation API, ActiveSupport::Deprecation, and every framework component, like ActiveRecord, uses it to warn about usages that are deprecated and subject to removal, replacement or change in an upcoming release (in most cases the warnings include a suggestion on how to deal with them).

At Skroutz, we have set up this deprecation mechanism to work along with Rails’ instrumentation API, ActiveSupport::Notifications.

Instead of raising an error or just logging a deprecation, we configured all of our environments to notify in case of a deprecation

    config.active_support.deprecation = :notify

and in an initializer we subscribed to the related event in order to implement our deprecation handling.

    ActiveSupport::Notifications.subscribe('deprecation.rails') do |_, _, _, _, payload|
      # Deprecation handling goes here
    end

We define an allowed list of deprecation messages - deprecations that we don’t have to deal with at the moment and should be ignored.

The following table shows how our handling works:

| Environment | Allowed deprecation | Action |

|---|---|---|

| Production | Yes | Nothing |

| Production | No | Send event to Sentry |

| All other environments | Yes | Log the deprecation |

| All other environments | No | Raise it as an error |

With some simplifications, the code would look like this:

    class DeprecationHandler
      # Deprecations that we have decided not to deal with at the moment.
      ALLOWED_LIST = [
        /You should not do this/
      ].freeze

      def self.handle(payload)
        allowed = ALLOWED_LIST.any? { |pattern| pattern.match?(payload[:message]) }

        if Rails.env.production?
          return if allowed

          # report_to_sentry is omitted from this simplified version.
          report_to_sentry(payload)
        elsif allowed
          Rails.logger.tagged('active_support', 'deprecation') do
            Rails.logger.warn(payload[:message])
          end
        else
          raise ActiveSupport::DeprecationException, payload[:message]
        end
      end
    end

    ActiveSupport::Notifications.subscribe('deprecation.rails') do |_, _, _, _, payload|
      DeprecationHandler.handle(payload)
    end

Given the above, most of the messages in our allowed list before we started the upgrade were deprecations emitted by Rails 6.0 for behaviour that would change or be removed in our target version, 6.1.

    ...
    /Initialization autoloaded the constants/,
    /Class level methods will no longer inherit scoping from `/,
    /update_attributes(!)? is deprecated and will be removed from Rails 6.1 \(please, use update(!)? instead\)/,
    /ActionMailer::Base\.receive is deprecated and will be removed in Rails 6\.1\. Use Action Mailbox to process inbound email\./,
    /ActionView::Base instances should be constructed with a lookup context, assignments, and a controller/,
    /ActionView::Base instances must implement `compiled_method_container` or use the class method `with_empty_template_cache` for constructing an ActionView::Base instance that has an empty cache/,
    /Rails 6\.1 will return Content-Type header without modification/,
    /render file: should be given the absolute path to a file/,
    /NOT conditions will no longer behave as NOR/,
    ...

So, before starting to check each one of the 1.5k failing specs mentioned in the previous section, we first worked on dealing with these deprecations. How?

For each deprecation:

- we created a branch from our main branch

- we removed the deprecation from the allowed list

- we ran the test suite on the branch and we located the parts that were generating the deprecations - remember that our deprecation handling raises errors for non-allowed messages

- engineers from each group prepared commits to the branch fixing the deprecations relevant to their team

- when the suite got green, we shipped it to production, and

- we checked our production monitoring system for deprecation events, i.e. deprecations triggered by code paths that the test suite did not cover

Note here that the changes were backwards compatible - fixes were merged in the main branch and not deferred to rails-upgrade-main for the final upgrade.
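To make the effect of removing an entry from the allowed list concrete, here is a framework-free sketch of the decision table from the previous section. `DeprecationPolicy` and `action_for` are hypothetical names used only for illustration; the real handler calls Rails and Sentry instead of returning symbols.

```ruby
# Hypothetical stand-in for the handler: it returns the action that the
# table prescribes instead of logging, raising or reporting.
class DeprecationPolicy
  def initialize(allowed_patterns, production:)
    @allowed_patterns = allowed_patterns
    @production = production
  end

  def action_for(message)
    allowed = @allowed_patterns.any? { |pattern| pattern.match?(message) }

    if @production
      allowed ? :ignore : :report
    else
      allowed ? :log : :raise
    end
  end
end

message = 'NOT conditions will no longer behave as NOR in Rails 6.1.'
allowed = [
  /NOT conditions will no longer behave as NOR/,
  /render file: should be given the absolute path to a file/
]

# Before the cleanup the deprecation is only logged outside production.
puts DeprecationPolicy.new(allowed, production: false).action_for(message)

# Dropping the pattern from the list turns every remaining usage into a
# failing spec, and into a monitoring event in production.
remaining = allowed.reject { |pattern| pattern.match?(message) }
puts DeprecationPolicy.new(remaining, production: false).action_for(message)
puts DeprecationPolicy.new(remaining, production: true).action_for(message)
```

Run against the branch, the second case is what points the test suite at every remaining usage, while the third case covers code paths that the suite never exercises.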

Working for the upgrade

After fixing all the deprecations for Rails 6.1, the test suite ended up failing with only 50 errors or so. Good news, right?

Well, this is actually the trickiest part of the upgrade process. For each failure we have to investigate and find out which changelog entry caused it, in order to get a good understanding of what changed and how to fix it.

As previously noted, we can’t know exactly how a changelog entry actually affects the codebase and in many cases we will have to check the Rails PRs that have been merged to the new version in order to gather more information.

Also, note that some failures might actually happen due to a framework’s bug introduced in the new version, such as this one that we located in one of our specs and for which we opened a Rails PR upstream.

For each of the failing specs in our suite, we created a task in the tracking system and we assigned it to the proper contact person to either work on it or delegate it to one or more team members.

Normally, any work that has to be done from now on would be committed in the rails-upgrade-main branch.

The whole process might take some weeks or even months to complete and rebasing this branch to the main one should take place on a weekly basis if not more frequently.

To eliminate the effort of conflict resolution by rebasing to the main branch though, there are some things that we can do.

Backportable changes

There will be changes that will work both in the current and the target Rails version - these could and should be directly committed to the main branch.

For example, one of our specs used the last_migration method of ActiveRecord::MigrationContext, which was removed in Rails 6.1, so we now had to calculate that value ourselves. Since the calculation would also work in the Rails version running on the main branch, we pushed the fix there instead of the rails-upgrade-main branch.
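How such a value is calculated depends on the spec. As a minimal, framework-free sketch, assuming that migration files follow the usual `<version>_<name>.rb` naming, the most recent migration is simply the file with the highest version prefix. The struct and helper names below are hypothetical and the file names are made up.

```ruby
# Hypothetical value object for a migration file found on disk.
MigrationFile = Struct.new(:version, :name)

# Parses "db/migrate/20210315093000_add_sku_to_products.rb" into a
# MigrationFile, or returns nil for files that are not migrations.
def parse_migration(path)
  match = File.basename(path).match(/\A(\d+)_([a-z0-9_]+)\.rb\z/)
  return nil unless match

  MigrationFile.new(match[1].to_i, match[2])
end

# Picks the migration with the highest version, independently of the
# Rails version that happens to be loaded.
def latest_migration(paths)
  paths.map { |path| parse_migration(path) }.compact.max_by(&:version)
end

paths = [
  'db/migrate/20200101000000_create_users.rb',
  'db/migrate/20210315093000_add_sku_to_products.rb',
  'db/migrate/README.md'
]

latest = latest_migration(paths)
puts "#{latest.version} #{latest.name}"
```

Because nothing here depends on a Rails API that differs between 6.0 and 6.1, a change of this kind can be merged to the main branch immediately.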

Non-backportable changes

For the rest of them, if a change is relatively small and contained, we can use a condition and alter the implementation based on it.

In a base module of the application we added the following helper methods:

    module Skroutz
      def self.rails_next_version
        Gem::Version.new('6.1') # Your target version here
      end

      # True when the running Rails version is at least the target one.
      def self.rails_next?
        Rails.gem_version >= rails_next_version
      end
    end
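The comparison in `rails_next?` relies on `Gem::Version` ordering, which has one detail worth knowing: prerelease versions sort before the final release. The sketch below reproduces the same comparison without Rails, taking the running version as an argument; `TARGET_RAILS` and `rails_next_for?` are hypothetical names, and the target is assumed to be 6.1 as in this post.

```ruby
require 'rubygems'

TARGET_RAILS = Gem::Version.new('6.1') # assumption: the target of this upgrade

# Same comparison as rails_next?, with the running version injected so
# that it can be tried outside of a Rails application.
def rails_next_for?(running_version)
  Gem::Version.new(running_version) >= TARGET_RAILS
end

%w[6.0.4 6.1.0.rc1 6.1.0 6.1.4].each do |version|
  puts format('%-10s next? %s', version, rails_next_for?(version))
end
```

A release candidate such as 6.1.0.rc1 does not satisfy the check, so anyone testing the upgrade branch against a prerelease would need to lower the target accordingly (for example to 6.1.0.rc1).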

Then, when introducing a small change like the following, use the provided condition above to differentiate the behaviour.

Assume that there is a Rails framework method rails_method that returns a number in the current Rails version and we use it in a file that is frequently changed in the codebase:

    class ClassWithManyChanges
      def a_method
        if Rails.rails_method(params) == 1
          logger.info 'All good'
        end
      end
    end

but in the next Rails version it returns a boolean instead of a number.

Instead of changing the condition to compare against true rather than 1, which would work only in the rails-upgrade-main branch,

    class ClassWithManyChanges
      def a_method
        if Rails.rails_method(params) == true
          logger.info 'All good'
        end
      end
    end

we can instead do the following and push it to the main branch.

    class ClassWithManyChanges
      def a_method
        # TODO(rails6.1): Cleanup after upgrade
        against_value = Skroutz.rails_next? ? true : 1

        if Rails.rails_method(params) == against_value
          logger.info 'All good'
        end
      end
    end

This might seem a bit weird but besides saving time from conflicts upon rebase, it also works as a warning for engineers when they attempt to change a part in the main branch that has altered behaviour in the next Rails version.

Delivering the upgrade

At this point the suite is green and the most important milestone of the upgrade has completed successfully. Now, the target is to deliver it in a safe manner and without surprises. Well, at least with the least possible surprises :)

Sanity testing

It is very common in our field to test something in the development environment and find out that it’s working, write specs about it and they get green but once it goes live users see the 500 page as our new feature.

To eliminate these cases, having a staging environment that is very close to production is a lifesaver.

Core testing

This is a list of items to test against the new framework version:

- Migrations: we run at least one ActiveRecord migration to make sure that everything works as expected and we review the generated changes in the schema.

- Caching: when upgrading to a new version, it is very common to have failures when deserializing an object that was cached by the previous one. We must try to identify such cases and note them down in order to be prepared to clear the affected keys from the cache upon releasing the upgrade unless, like in our case, we can afford a whole cache clear.

- Encryption: if we use Rails’ encryption (ex. encrypted cookies), we have to make sure that the decryption succeeds in the new version (and vice versa in case of a rollback).

- Integrations: the following checks should also be done (depending on the setup):

  - rake: make sure that the application loads and the execution completes successfully for the most important tasks. In addition, if we are using libraries like `whenever` for cron tasks, we should check that the generation of the crontab list succeeds and the result is identical to the previous version’s one.

  - Background jobs: in our setup, we use resque and kafka for background processing - we queued jobs to both and made sure that their execution completed with the desired results.

  - Elasticsearch: index documents to ensure that new version changes to ActiveRecord models haven’t affected the generated JSON to be indexed on the server

- Benchmarking: at this point, we have to monitor the performance of the application. We used StackProf along with flamegraphs and Ruby’s Benchmark module and compared the performance (memory usage and timings) of our most critical flows.

- Traffic replay: we replay a large sample of production requests against the old and the new implementation and we verify that the results are identical
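For the caching item above, one way to find affected keys is to probe raw payloads written by the old version and see which ones the new code can no longer load. The following is a minimal sketch that assumes the cache holds Marshal-serialized payloads; `loadable?` and `stale_keys` are hypothetical helpers, and a removed class stands in for whatever changed between versions. `Marshal.load` should only ever be used on trusted data such as our own cache.

```ruby
# True when a Marshal payload can still be loaded by the running code.
def loadable?(payload)
  Marshal.load(payload)
  true
rescue ArgumentError, TypeError, NameError
  false
end

# Returns the keys whose payloads can no longer be deserialized.
def stale_keys(raw_entries)
  raw_entries.reject { |_key, payload| loadable?(payload) }.keys
end

# Simulate an entry that was cached while a class still existed.
class LegacyPrice
  def initialize(cents)
    @cents = cents
  end
end

entries = {
  'price/1' => Marshal.dump(LegacyPrice.new(1999)),
  'count/1' => Marshal.dump(42)
}

# Simulate the new version, in which the class is gone.
Object.send(:remove_const, :LegacyPrice)

puts stale_keys(entries).inspect
```

The keys reported this way are the ones to note down for clearing at release time, unless a full cache clear is affordable.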

Application testing

We deployed the rails-upgrade-main branch to our staging environment and we asked all product groups to perform manual tests, at least for the most important flows of their domain. In our case, this step led to a couple of important bug fixes that would otherwise have reached production.

Spread the news

We checked everything. We’re ready to move on.

Given that the deployment of the upgrade will require some time and that our engineering team counts more than 160 members, it is important to inform everyone about the release date a few days beforehand:

- Product engineers: Teams with a tight schedule to release an important feature should not be informed about the upgrade at the last minute. We have to make sure that we will not block any important operations and we might even end up postponing the release for a few days if another milestone has a higher priority.

- Platform engineers: Our platform team that is responsible for the infrastructure and the site reliability, also has to be informed soon enough to reserve the appropriate time to help us with the upgrade and its monitoring afterwards.

- Contact persons: The Core team is the one to deploy the upgrade, though the Contact persons have to be available throughout the process to help, investigate and hotfix if something related to their domain comes up.

Deployment

We found the date. What are we actually going to do on that day?

Canary Release

Our setup consists of many servers grouped by their purpose:

- application servers: serving the application to our end users

- workers: executing the background jobs

- internal tools: serving parts of the monolith to internal users (ex. content editing, reporting…)

- etc

Instead of deploying the Rails upgrade to all of the servers at once, we follow the Canary Release technique. In a nutshell, with this approach the changes are deployed to a subset of the servers and in an order that will reduce the impact in case of failure.

In our case, it was obvious that we should start with the servers dedicated to our internal tools. This would help us get immediate feedback from our internal users and our monitoring system and also avoid causing unnecessary frustration to our end users. So we deployed the upgrade to one of the group’s servers, everything went well and we moved on to deploying to the rest of them.

Even though the workers group seemed a good next candidate, we decided to deploy to it last because in case of failure, on top of resolving the error, we would have a large amount of operational work to do related to the failed jobs.
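The ordering logic described above can be sketched in a few lines. This is not our deploy tooling: `ServerGroup`, `canary_rollout` and the host names other than internals-1 are hypothetical, and the `deploy` and `healthy` callables are stand-ins for the real deploy command and the monitoring checks.

```ruby
ServerGroup = Struct.new(:name, :hosts)

# Deploys group by group. Within a group, one canary host goes first and
# the rest follow only if the canary looks healthy. Stops at the first
# unhealthy canary so that later groups are never touched.
def canary_rollout(groups, deploy:, healthy:)
  groups.each do |group|
    canary, *rest = group.hosts
    deploy.call(canary)

    unless healthy.call(canary)
      return { status: :halted, group: group.name, host: canary }
    end

    rest.each { |host| deploy.call(host) }
  end

  { status: :completed }
end

# Internal tools first, workers last, as described above.
groups = [
  ServerGroup.new('internals', %w[internals-1 internals-2]),
  ServerGroup.new('application', %w[app-1 app-2 app-3]),
  ServerGroup.new('workers', %w[worker-1 worker-2])
]

deployed = []
result = canary_rollout(
  groups,
  deploy: ->(host) { deployed << host },  # stand-in for the deploy command
  healthy: ->(host) { host != 'app-1' }   # pretend the app canary misbehaves
)

puts result.inspect
puts deployed.inspect
```

In this simulated run the rollout stops at the application canary, so the remaining application servers and all of the workers keep running the previous version while the problem is investigated.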

Create a detailed plan

As we described above, the deployment is a multistep process. It is extremely helpful to have a document with all the steps that we will need to follow on the release date.

We created a task in our tracking system in the milestone’s board in which we documented each specific deploy action along with notes, commands and resources (ex. monitoring links).

Here’s a sample:

1. Lock deploy

2. Merge `rails-upgrade-main` to `main`

3. Ensure successful build in Jenkins

4. Deploy to internals-1

Command: $ TARGET=internals-1 bundle exec deploy

Monitoring: https://monitoring/events?host=internals-1

5. Deploy to all internals

Command: $ TARGET=internals bundle exec deploy

Monitoring: https://monitoring/events?host=internals

...

10. Clear Rails.cache

Command: $ Rails.cache.clear

...

Upon the deployment, we might end up executing different commands, add new steps etc. Updating the task with these changes will be valuable since we will revisit this task to create the next upgrade’s release plan.

Monitoring

We use Sentry for reporting exceptions in production and Grafana with a large number of dashboards, most of which have alerts configured. Both tools send notifications to one or more Slack channels.

During the release of course, we were not waiting for notifications to appear in Slack - we had the critical dashboards opened in our browsers and we were checking their state constantly till the moment we felt confident that everything was fine.

After cross-checking with the Platform team that things look good on their side as well we considered the release successful!

No, not yet but close. We might have scheduled tasks that execute during the night or on specific days on a weekly basis so we have to remember to check the monitoring tools occasionally for errors that might be triggered by them until all of them complete successfully once after the upgrade.

Next steps

Rails 7 is around the corner and there are a couple of things we can do to be better prepared for the next upgrade.

- Gem updates: we can schedule more frequent updates of our gems (especially those required by or depending on Rails) saving time from the next upgrade milestone

- Deprecations: new deprecations appeared in the current version and we can already start working on them moving our codebase to a more compatible state for the next version

- Release information: we need to keep our eyes open for any major changes, new features etc. about the new release

Now we’re done :)

If you like providing a top-notch development environment or you get intrigued by working with Ruby & Rails, make sure to check our Core team’s open position, or our other job openings.

Thank you for reading!

PS: We forgot to acknowledge the upgrade’s coordinator.